Image recognition, and facial recognition in particular, is nothing new to casual users of smartphones and social networks. Many social networks, including Facebook, have been using facial recognition to suggest the names of “friends” when tagging newly uploaded photos. With improved algorithms reporting higher confidence values, apps like Facebook Moments now boldly suggest that you send photos to the recognised friends who feature in them. Academics and companies are carrying out extensive research in image recognition to further these kinds of applications. In a paper titled “DeepFace: Closing the Gap to Human-Level Performance in Face Verification”, Taigman et al. proposed an approach to face recognition in unconstrained images that performs remarkably well, with an “accuracy of 97.35% on the Labeled Faces in the Wild (LFW) dataset”. That is on the brink of human-level accuracy!

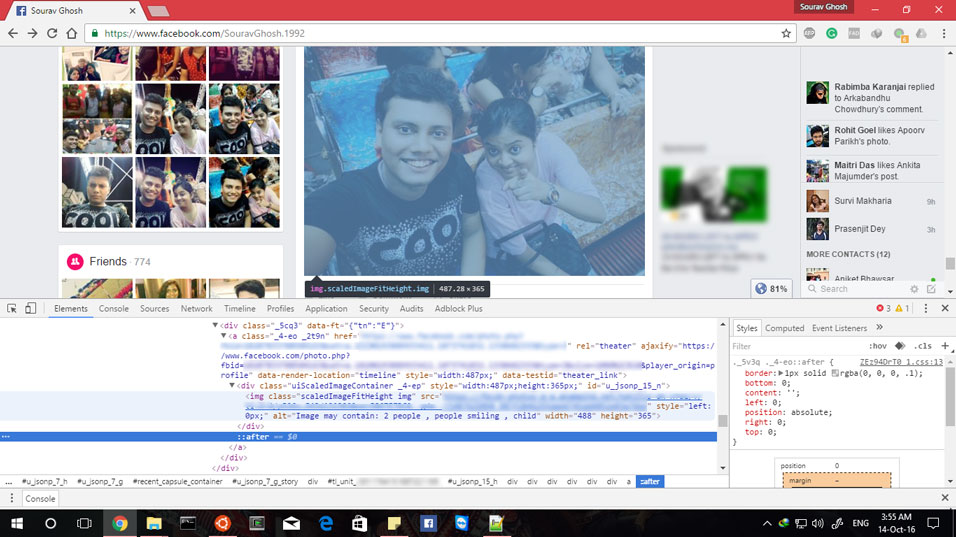

In this article, I am going to informally evaluate a new application to which Facebook is putting its algorithms. A few months ago, Facebook announced that, to help users with impaired eyesight perceive photos, it would add the features it detects in an image to the alt-text of that image, which text-to-speech applications can then use to describe the image contents.